August 14, 2023 ☼ The Intersection ☼ Information Age ☼ Artificial Intelligence ☼ Tech Policy

The immediate and important task for AI policy is to govern the industry

India should prevent the concentration of market & technological power in the hands of a few

This is from The Intersection column that appears every other Monday in Mint.

The manner in which the world’s big artificial intelligence (AI) companies are scaring the world’s governments and asking for regulation reminds me of how incumbent telcos used to push ‘Fear, Uncertainty and Doubt’ (FUD) 20 years ago. We should suspect that the motives are similar: to use regulation to slam the gates shut for new entrants and use incumbency to acquire greater power over public policy.

If OpenAI and Google are really worried that their products are dangerous and pose severe, unpredictable risks to public safety, they could stop developing them. It is reasonable, therefore, to suspect that their calls for regulation of so-called foundation models are partly motivated by the desire to lock in their dominant market positions.

Even the narrative of the dangers and risks of generative AI takes us in a direction determined by a few big companies in Silicon Valley and the intellectual and media ecosystem they have promoted. So the world is concerned with the big question of what to do about the imminent arrival of an all-powerful artificial general intelligence that poses a challenge to human civilisation.

In response, a lot of intelligent people around the world are working on the alignment problem of how to ensure that intelligent machines do what is in the human interest. Some of the smartest minds in the US have polarised themselves into warring ‘AI safety’ and ‘AI ethics’ tribes, the former concerned with reducing harm and the latter with eliminating biases and discrimination. These issues are serious and grave, but they distract attention from more immediate questions of public policy.

The challenge before India, as for other countries, is how to govern the AI industry. The technology and products are already here, they are quite different from what we’ve seen before, and the industry structure is not properly defined. The longer the government takes to put in place a governance framework for the industry, the longer its first movers will have to establish their positions, norms and practices and present them as a fait accompli.

What principles should India adopt to govern the AI industry? First, there is an overriding public interest in ensuring that this revolutionary new technology does not concentrate power in the hands of a few. A thoughtful CTO of one of India’s successful startups suggested to me that if AI is a superpower, then it is best if we make it available to everyone. This makes sense, because politics is about relative power. Distributing power among a large number of people, theoretically everyone, ensures that no one can use AI to dominate others. Yes, this means that bad people will use the power to create disinformation, spread hatred and commit crime. But there will be an overwhelmingly larger number of actors who will put the technology to good use, and society as a whole will be better equipped to counter bad actors.

Second, India should prefer open technologies over proprietary ones. In a January 2022 column, I argued that “open-source software is in India’s national interest, given the unfolding economics and politics of the technology space… To attempt technological sovereignty by reinventing everything and insisting on localisation would be counter-productive. A far more effective approach is to focus on open-source projects, build for the whole planet and derive a strategic advantage.” The availability of open-source models, datasets, digital standards and application programming interfaces (APIs) will not only lower the barriers for India’s technology companies to become globally competitive, but also make the technology widely affordable.

Third, being a labour-surplus economy, we should prefer AI systems that augment human capacity rather than substitute for it. As Stanford University’s Erik Brynjolfsson points out, augmenting labour creates more value than automating it because it creates something new. An incentive structure that encourages the industry to work on augmentation can raise both employment and productivity (and thus real wages). Indeed, getting this right might be one of India’s most important short-term priorities, given AI’s potentially massive impact on jobs.

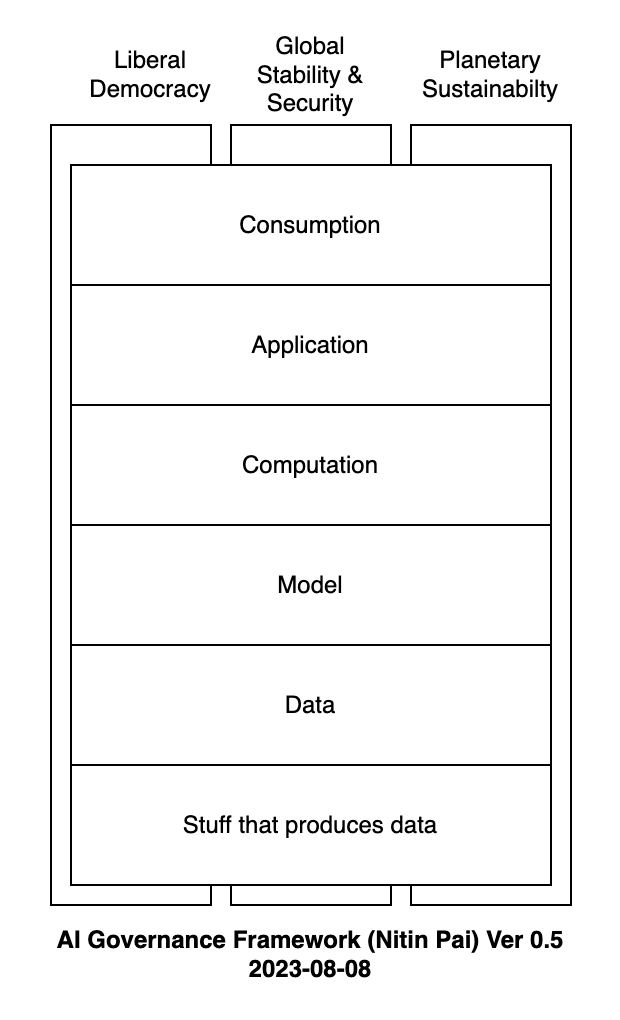

How could we translate these principles into a governance framework? The industry is still finding its shape and form, but I think it makes sense to see it stacked in four layers, from bottom to top: data, models, computation and application. The policy goal would be to ensure there is competition within each layer and prevent vertical integration across layers. This means requiring owners of datasets to make data available to all models on the same commercial and technical terms; the same would apply to model and computation providers. Policy wonks, lawyers and tech heads need to come together and figure out how to achieve such an outcome. These are the conversations that we should be having now, instead of worrying about a rogue AGI taking over the planet.

I think we have a moral imperative to exploit every opportunity to improve the life outcomes of our people. A lot of the policy discourse on generative AI is concentrated on its harms. But we should not succumb to FUD.

© Copyright 2003-2024. Nitin Pai. All Rights Reserved.